( ESNUG 533 Item 2 ) -------------------------------------------- [10/15/13]

Subject: User runs new BDA ACE characterization with Cadence IC6 ADE-L

> The three main factors that convinced us to move to BDA ACE were:

>

> - ability to thoroughly characterize any complex analog or RF circuit

> - effective performance vs. specification reporting tool

> - ease of use shortening the overall setup time

>

> - http://www.deepchip.com/items/dac13-04.html

From: [ Mr. Happy ]

Hi, John,

Anon pls.

Using that new BDA ACE characterization tool with Cadence ADE-L shortened

our 28 nm ADC design cycle down to 20 weeks.

I'm an analog design director, and have been working on analog designs for

over 15 years, spanning design processes from 180 nm to 28 nm these days.

My team develops high performance low-power giga-sample/second ADCs, DACs

and high-speed SERDES in 28 nm CMOS technologies, for mobile communication

and instrumentation applications. Thus, our ADCs and DACs need to achieve

SFDR levels of better than 105 dB FS.

Due to these extremely tight accuracy requirements, it is mandatory for us

to fully understand the impact of process variations, even though the data

converters we use apply calibration techniques to overcome some of the

process-related shortcomings. Our characterization overhead is tremendous

compared to 40 nm CMOS due to the increased process spread in 28 nm CMOS.

CADENCE IC5/IC6 and ADE:

In the past, my team and I used Cadence IC5. Within it, I could do design

and characterization across lots of corners plus do Monte Carlo without

needing other tools or even going outside of ADE.

But this new Cadence IC6 with ADE-L only allows users to do basic circuit

performance verification.

- Setting up corner and Monte Carlo simulations is extremely

complex inside ADE-XL and not intuitive.

- Lots of commonly used simulator functions are hidden in sub-menus.

The visibility of the entire characterization setup is very limited and

requires lots of setup time and experience.

---- ---- ---- ---- ---- ----

BERKELEY-DA ACE EVALUATION:

Our first priority was that ACE had to enable my team and me to thoroughly

characterize the circuits as well as provide us with a clear specification

PASS and FAIL condition such that the designer is able to modify/improve

the circuit accordingly.

Our other evaluation criteria were: ease of use, good visibility into the

entire characterization setup, short setup time and integration into our

existing Cadence design flow.

For a holistic assessment, we used different types of circuits to verify ACE

across diverse sets of specs as well as modes of operation.

- We applied sampled circuits like Switched-Capacitor Circuits

(SC-Circuits) to verify the microvolt (uV) noise and settling

requirements across corners and Monte Carlo.

- A programmable gain amplifier (PGA) was chosen to analyze small

signal and stability parameters like phase margin and gain

bandwidth.

- We used a voltage reference circuit with a bandgap to exactly

analyze process variations and yield.

My team started to use ACE after only 1 hour of introductory training!

On the first day, my entire team successfully applied all the ACE tools such

as its distribution analyzer, automated performance-vs.-spec reporting, and

sophisticated corner-and-parameter SPICE simulation loop nesting.

After this eval, we decided to incorporate ACE into our existing Cadence

28 nm design flow for characterizing our ADCs and DACs.

---- ---- ---- ---- ---- ----

ACE CHARACTERIZATION FEATURES:

Below is my assessment of ACE's characterization features:

1. The drag-and-drop paradigm for creating a suite of simulation

experiments.

This is great because it makes it intuitive to build your set of experiments

quickly. We like to do this in ACE because it's much easier and much faster

than with Cadence ADE-L, ADE-XL, or Synopsys Custom Designer.

With the drag-and-drop, we are able to create arbitrary nesting so we can

make sure we structure our characterization experiments exactly as we want

and not be forced into a 'tool template'. Compared to 40 nm CMOS, nesting

becomes even more important in 28 nm with its process spread, temperature

and power supply sensitivity, and mismatch problems.
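To make the nesting idea concrete, here is a minimal sketch of how a nested corner/temperature/supply experiment expands into individual simulation points. It is not ACE's interface; the axis names, values, and the `build_experiments` helper are all hypothetical and only illustrate why the run count grows quickly as loops are nested.

```python
from itertools import product

# Hypothetical sweep axes; names and values are illustrative only.
corners = ["tt", "ss", "ff"]
temps_c = [-40, 27, 125]
vdd_v = [0.81, 0.90, 0.99]

def build_experiments(mc_runs_per_point):
    """Expand nested loops (corner > temperature > supply) into a flat job list."""
    jobs = []
    for corner, temp, vdd in product(corners, temps_c, vdd_v):
        jobs.append({
            "corner": corner,
            "temp_c": temp,
            "vdd_v": vdd,
            "mc_runs": mc_runs_per_point,  # Monte Carlo nested inside each point
        })
    return jobs

jobs = build_experiments(mc_runs_per_point=100)
total_mc = sum(job["mc_runs"] for job in jobs)
print(len(jobs), "simulation points,", total_mc, "Monte Carlo runs")
```

Three values on each of three axes already give 27 points, and nesting 100 Monte Carlo runs inside each point gives 2700 simulations.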

ACE also automatically post-processes all of its results, saving me the pain

of having to write many scripts.

2. ACE's characterization console

The ACE console gives me a systematic view of my jobs. It lets me decide

what experiments to launch, monitors the progress of the experiments in

real-time, and assesses how I am doing against my characterization plan.

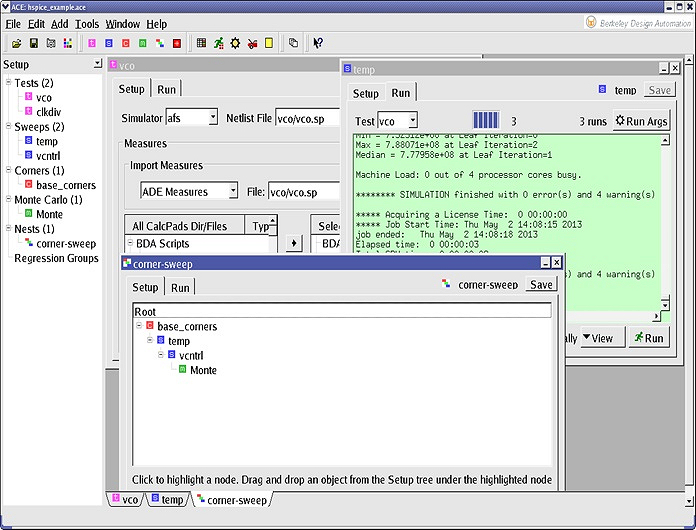

Fig 1. ACE Console for setup and launching SPICE simulations

for access to corner runs, parameter sweeps, nested

loops and MC analysis. (click pic to enlarge.)

This runtime monitor lets me see what jobs are queued, running, failed, or

completed -- and it's organized by how I have set up the experiments (i.e.

specific circuits, specific measures, or my variation objectives.)

Cadence ADE doesn't do this. The only way I could get such functionality

would be to create spreadsheets by hand myself.

3. Automatic circuit-specification checking with flexible reporting

ACE supports a designer creating various types of measures and an extensive

set of post-processing functions tailored to AM/S circuit engineers. For

example, it supports 2-dimensional checking for certain types of circuit

specifications, such as receiver operating characteristics (ROCs). This ability to

quickly specify and then check performance-vs.-specification is essential.

This reporting isn't possible in either Cadence IC5 or Cadence IC6 ADE-L.
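As a rough illustration of what performance-vs.-specification checking means, the sketch below compares measured values against lower and upper limits and reports PASS or FAIL per measure. The spec names, limits, and measured numbers are hypothetical, and this is not ACE's reporting format.

```python
# Hypothetical specs as (lower limit, upper limit); None means unbounded.
specs = {
    "phase_margin_deg": (60.0, None),
    "gain_bw_mhz": (500.0, None),
    "noise_uv_rms": (None, 50.0),
}

def check_specs(measured, specs):
    """Return a PASS/FAIL verdict for each measure against its limits."""
    report = {}
    for name, (low, high) in specs.items():
        value = measured[name]
        ok = (low is None or value >= low) and (high is None or value <= high)
        report[name] = "PASS" if ok else "FAIL"
    return report

# Hypothetical results from one simulation point.
measured = {"phase_margin_deg": 63.2, "gain_bw_mhz": 480.0, "noise_uv_rms": 41.0}
report = check_specs(measured, specs)
for name, verdict in report.items():
    print(f"{name:18s} {measured[name]:8.1f}  {verdict}")
```

Running the same check over every corner and Monte Carlo iteration is what turns raw simulation output into a spec report.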

4. Distribution analyzer for visual analysis of variation effects

The main reason I evaluated Berkeley ACE was its ability to systematically

do variation analysis. It means I can use the same procedure/process

steps for every circuit every time.

The distribution analyzer is the only capability I know of that enables

circuit designers to quickly plot and analyze their results for any measure.

Neither Cadence IC5 nor IC6 has this analyzer.

Your results from the SPICE simulations can be plotted to show how they vary

per iteration. Circuit designers can see histogram-based distributions for

any set of measures with little effort. Being able to compare a mix of

variation results (Monte Carlo, sweeps, corners) on the same plot is powerful.

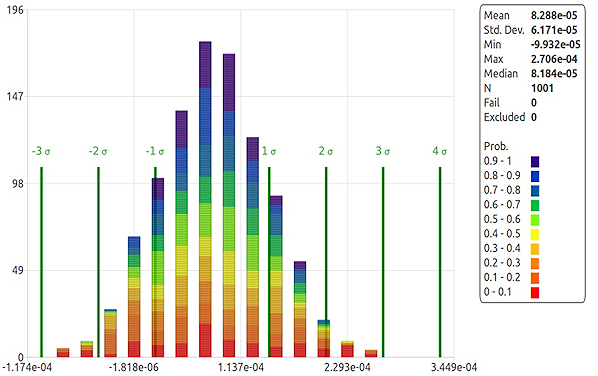

Fig 2. ACE Visual Distribution Analyzer with 1000 iterations

showing all required probabilities and sigma boundaries

(click pic to enlarge.)

BDA ACE's distribution analyzer also gives me a couple of unique things that

help me better understand what variation does to circuit behavior:

- It displays the worst-case confidence-interval impact.

- It defines the confidence interval bands for each measure based

on the number of SPICE iterations that have been run.

This is very important because we have to start using statistical confidence

intervals to guide us on how much simulation we must run for each specific

measure -- and no other tools give us this type of guidance.

Based on the characterization experiments run, I can see where the variants

and specifications fall relative to the sigma markers. ACE handles Gaussian

and non-Gaussian distributions. For a given set of specifications, the ACE

distribution analyzer can also give you worst-case expected yields.
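The link between the number of iterations and the confidence band can be sketched with a standard Wilson score interval on the observed pass fraction. This is a textbook method chosen for illustration; the post does not say how ACE computes its bands, and the 99% pass rate below is a made-up example. The lower bound can be read as a pessimistic (worst-case) yield estimate.

```python
import math

def wilson_interval(passes, runs, z=1.96):
    """Wilson score interval for a pass fraction (z=1.96 is roughly 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = passes / runs
    denom = 1.0 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, center - half), min(1.0, center + half)

# Same observed yield, increasing number of Monte Carlo iterations.
for runs in (100, 1000, 10000):
    passes = round(0.99 * runs)
    low, high = wilson_interval(passes, runs)
    print(f"{runs:6d} runs: observed 99.0% yield, interval {low:.4f} .. {high:.4f}")
```

The interval narrows as the run count grows, which is the kind of guidance that tells you when more simulation is still buying information for a given measure.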

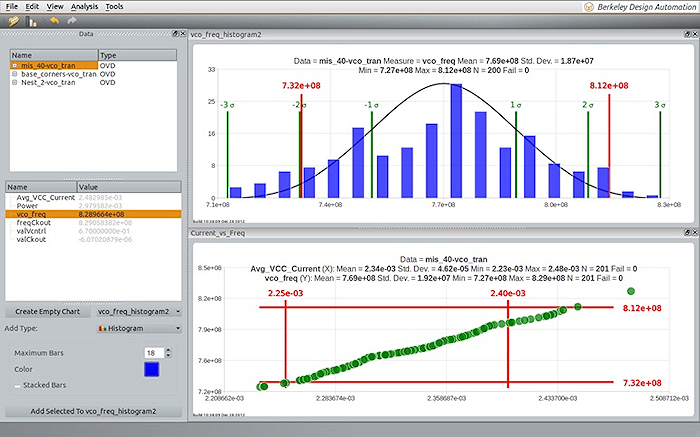

Fig 3. ACE Distribution Analyzer for detailed analysis reporting

(click pic to enlarge.)

Most standard Monte Carlo SPICE simulation runs do not compute iteration

probabilities, so we normally assume that each iteration has an equal

weighting. However, we've found that is not the case. It completely

depends on the measure (for example, aperture jitter or SNDR) and the

underlying statistics of the measurement (which are very different for

these two).

ACE displays MC iteration probabilities. This corresponds to the likelihood

that a given Monte Carlo iteration will occur relative to all other possible

iterations. This is a great feature because it helps me quickly identify

specific cases where I may need to investigate the design further. In ACE,

I can take any specific case for further investigation, create a specific

corner automatically, and then focus on studying the circuit sensitivity

using this specially created corner.

---- ---- ---- ---- ---- ----

OUR 28nm DESIGN FLOW WITH BOTH BERKELEY-DA AND CADENCE

- We use Cadence IC6 ADE-L to perform the transistor sizing as

well as designing the analog circuit. This includes verification

of some of the common worst-case corners.

- We then leave Cadence and use BDA ACE to fully characterize the

design across PVT (process, voltage, temperature), Monte Carlo

and other impairments like bias current variations.

Thus we have one best-in-class tool flow for 1) design, 2) verification, and

3) characterization.

We use this flow for small sub-circuits as well as for big top-level designs

with more than 700 K active MOS devices.

Also, we use it for post-layout SPICE runs with several million additional

interconnect R's & C's. Here we take advantage of ACE's multi-core parallel

simulator capacity to distribute our SPICE simulation runs across several

servers, each using multiple CPU cores for our characterization sweep.

- This gives us fast circuit design characterization with high

throughput even for large designs with millions of parasitic

interconnect devices.

- If ACE finds a 'failing' condition, in which the design does not

meet our specs, the designer goes back and resizes the circuit

using the failing test scenario.

After the redesign, we rerun the complete characterization set

again automatically, like a regression, to prove that the

implementation conforms to the specification.

All the results are directly documented by its automated

performance-vs.-specification reporting tool.

I've heard that BDA ACE works with multiple SPICE simulators, but we've only

used it with AFS with the multi-core parallel option.

- [ Mr. Happy ]
